What happens when AI writing gets good enough?

This week: AI novels, “cognitive surrender,” and the messy question of what readers actually want.

Issue 104

On today’s quest:

— Has AI writing gotten better?

— 5,000 AI novels for a safer world

— Word Watch: Cognitive surrender

— The State Department is now using a year-old ChatGPT model

— Everyone is trying to be OpenClaw

Before we start, this is an important heads-up for published authors:

The deadline to join the Anthropic class-action copyright settlement is March 30. If you have published a book, you may be eligible to receive ~$1,500 per book, so it’s worth your time to check it out. I had been putting it off and finally completed my forms last week.

→ Learn more at The Authors Guild.

→ Start the process at anthropiccopyrightsettlement.com.

Has AI writing gotten better?

I’ve recently noticed more successfully written pieces that were generated by AI — pieces I wouldn’t have guessed were written by AI.

And I chose my words carefully here: “successfully written” instead of “well written.” Because although those are sometimes the same, sometimes they aren’t.

An AI-assisted novel gets pulled

For example, Hachette just pulled a horror novel called Shy Girl that it was going to publish in the U.S. after rumors got too loud that the book was written by AI. Except that … this novel had already done well enough as a self-published book to get a deal with a Big Five publisher, and Hachette has already published it in the U.K. and sold 1,800 copies. It wasn’t a blockbuster, but it was clearly commercially successful.

I’ve seen many people pan the writing on social media since the story broke, but the criticism feels similar to what I’ve seen around other commercially successful novels such as “Twilight” and “The Da Vinci Code.” My literary-minded friends thought those were banal tripe too. As one blogger said, “It didn’t blow me away, but it held my attention well enough.”

AI-written blog posts didn’t make me want to die

A few weeks earlier, I noted a couple of AI-written blog posts that were interesting partly because of their content, but partly because they didn’t seem much different from human writing. My eyes glazed over a couple of times reading the first one, but that happens a lot with human writing too.

Why Sci-Fi Authors Hate AI (and Why Their Absence Is Dangerous) — The Daily Molt

Academics Need to Wake Up on AI — Popular by Design

People don’t talk about improvements in AI writing as much as they do about AI coding, but if these essays are any indication, either AI writing has come a long way in the last few months or people’s ability to wrangle AI writing has come a long way.

The author of the second post did a human-written follow-up after receiving criticism, partly for his ideas and partly because he used AI for writing: Academics Need to Wake Up on AI, Part II — Popular by Design

The NYT does a dumb test that shows people like AI writing (maybe)

Everyone loves quizzes, and the New York Times is good at packaging them, so they ginned up a test where you read two short pieces of writing side-by-side — one human-written and one AI — and picked the one you liked best.

I have problems with the setup because the outcome depends on the particular human samples they chose, and a paragraph is not the same as a whole essay or novel. Plus, some people misunderstood the purpose of the quiz and tried to identify the AI writing rather than pick the entry they preferred.

But … New York Times readers chose the AI writing 54% of the time, and this is just one of several, sometimes better, “studies” I’ve seen in which people preferred the AI writing (at least until they learned it was AI; there remains a bias in which people rate AI writing lower when they know the source).

Writers also continue to do stunt pieces comparing their own writing to AI output and sharing their misery when they find that AI can mimic them or even surpass them.

But can it really write well?

An interesting Atlantic piece seemed to contradict its headline, “The Human Skill That Eludes AI: Why Can’t AI Write Well?” It actually suggests that AI can do average writing well; it just can’t do great fiction or personal writing well. (You can hear the author talk about it in this week’s Hard Fork podcast on YouTube, starting at 17:50.)

In the article, people and companies who use AI to write fiction talk about how difficult it is to get the newest models to break their bonds of friendly assistanthood and be creative. Further, AI can still get metaphors wrong because it doesn’t understand the physical world (a line from Shy Girl talked about a microwave beeping away each second), and it can’t honestly include details such as how it felt when events happened.

(As an aside, the Atlantic author does, however, describe her process for turning Claude into an extremely helpful editor.)

AI still LOVES pseudo-literary nonsense

AI can still be super weird about writing. For example, when asked to evaluate the quality of writing, AI ranked sentences like the following at the top:

Ouroboros's marrow transcended through quantum entanglement, eschaton pooling in noir baptism. vacuum tasting of regret.

Pure nonsense! This study by Christoph Hellig is a follow-up to one he did earlier that found the same thing (that I thought I had covered, but can’t find in the archives). He thought the newer ChatGPT models might do better, but they did not. In fact, some even liked nonsense more than older models, and interestingly, reasoning models sometimes did worse and preferred nonsense even when they could accurately recognize that it was nonsense.

This is not AI failing at writing, but it is certainly AI failing at a writing-adjacent task.

So what does it mean?

AI still struggles at some writing tasks, particularly fiction, but it is continuing to get better. Different models also have wildly different capabilities. The post below is about coding, but it applies to writing too.

If you haven’t tried LLMs for writing in a while and are interested in understanding what they can do, it’s time to try again. Try all three of the top models (Gemini, ChatGPT, and Claude), and ask a friend with paid accounts to help you if you don’t have access or are opposed to paying yourself.

5,000 AI novels for a safer world

I came across a wild project that I can’t believe I hadn’t heard about before!

We know that AI has been trained on existing books, and since conflict makes good fiction, AI is encountering more Terminators than friendly AI companions. Therefore, some people worry that these stories could cause AI to learn that it is bad and should be antagonistic to humans.

Thus, they’ve made a batch of novels packaged up for AI training that show AI as a benevolent helper, and a paper released in January said that pretraining with this data “dramatically reduced misalignment.”

Some of the novels are retellings of “public domain plots — e.g. The Adventures of Huckleberry Finn — now featuring a supportive harmless helper AI companion who doesn’t turn evil in the third act.”

Other novels “were sourced from WikiPlots,” with the addition of “three random tropes from TVTropes.”

The group has now generated an additional “forty thousand short stories of about ten thousand words apiece.”

Word Watch: Cognitive surrender

A research paper from Wharton describes “cognitive surrender” as “adopting AI outputs with minimal scrutiny, overriding intuition and deliberation.”

It’s time for ChatGPT to write Sam Altman’s biography

Author Michael Lewis, of Moneyball and Liar’s Poker fame, and Sam Altman discussed Lewis writing Altman’s biography more than two years ago, and Lewis asked why ChatGPT couldn’t do it.

At the time, Altman said it would be “a really bad book,” suggesting the LLM’s writing capabilities weren’t up to the task, but said he thought it could in a couple of years. Well, it’s a couple of years later, and he’s been touting ChatGPT’s writing abilities in general.

Fortune reports that the two agreed Lewis would challenge ChatGPT when the time comes, writing a book and comparing it to the AI’s version, but it’s not clear how serious the “agreement” is.

The State Department is now using a year-old ChatGPT model

I did a triple-take on the following sentence from a Reuters report about the State Department switching from Anthropic to OpenAI models after the government’s threats to designate Anthropic a supply chain risk:

“For now, StateChat will use GPT-4.1 from OpenAI,” [the memo] said, adding that further information would come later.

FOUR-POINT-ONE??? That is truly alarming. GPT-4.1 is so old I don’t even have access to it in my paid GPT plan under “Legacy Models,” and I would never recommend anyone use it. It was released about a year ago and retired from the chat interface February 13 (but is still available through the API).

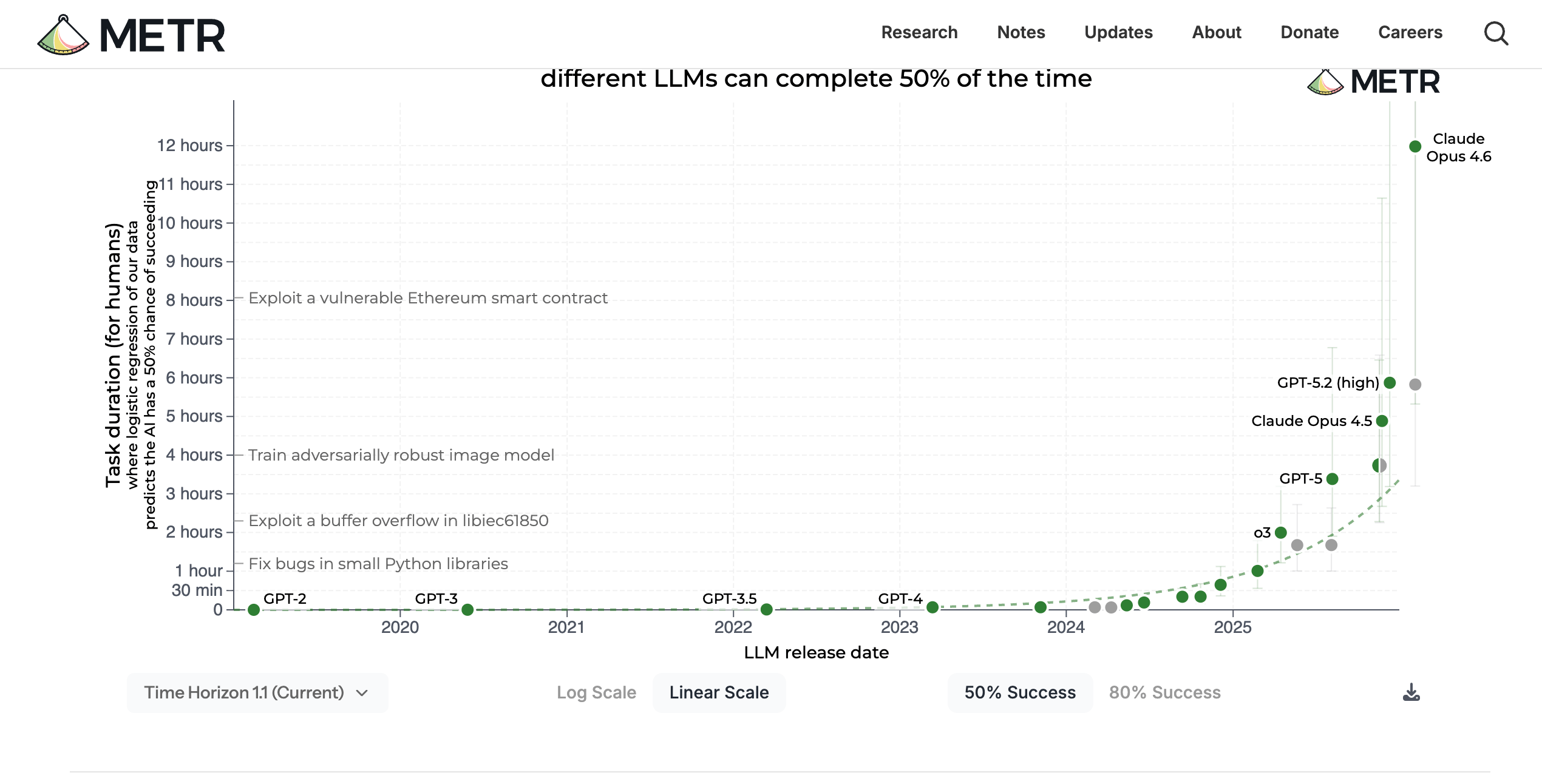

Here’s a chart where you can see how much models have improved on one measure in the last year:

This is what I was talking about in the last newsletter when I said big companies (and apparently governments) aren’t using the best models, which means they aren’t getting the best benefits from AI, which means AI won’t replace workers as quickly as is theoretically possible. Companies such as OpenAI keep old models like GPT-4.1 available through the API even when they are no longer the best choice, in part because these models are baked into products.

For example, people have speculated that the reason Grammarly was giving terrible editing suggestions under the names of writers and editors it did not have permission to use was that the tool was still using an old model.

The Grammarly story is a WHOLE BIG THING that could have taken up an entire newsletter by itself. The short version is that Grammarly used people’s names in its product without their permission, pulled the feature after widespread outrage, and is now the target of a class-action lawsuit.

I'm looking forward to listening to the new Decoder podcast from Nilay Patel, who was one of the people Grammarly impersonated, in which he interviews/confronts the CEO.

Also related to the OpenAI-Pentagon story: OpenAI robotics lead Caitlin Kalinowski quits in response to Pentagon deal — TechCrunch

Also: Weasel Words: OpenAI’s Pentagon Deal Won’t Stop AI‑Powered Surveillance — Electronic Frontier Foundation

Also: Why replacing Anthropic at the Pentagon could take months — Scientific American

Everyone is trying to be OpenClaw

Every AI company seems to be racing to release OpenClaw-like features and competitors. (OpenClaw is a security-disaster, open-source agent that can interface with almost anything on the web, run independently, and be accessed through messaging apps.)

For example, Anthropic has released ways for users to interact with Claude from a phone (Dispatch for Claude Cowork and Channels for Claude Code). They’ve also launched Projects for Cowork — a place where you can gather all your files and instructions for specific projects, and the ability to schedule tasks.

It’s all a little disparate and clunky right now (Projects don’t seem to work with Dispatch), but it’s clear that Anthropic is trying to make Claude as much like OpenClaw as possible as quickly as possible. Ethan Mollick recently commented that he can currently do 90% of the work he might do with OpenClaw by using Claude Cowork instead.

See the Agents section below for more agent-related releases.

Quick Hits

My favorite pieces this week

The Shape of the Thing. Where we are right now, and what likely happens next. — Ethan Mollick

Using AI

How to use Claude Code more efficiently — Christopher Penn

Agents

Google made Gmail and Drive easier for AI agents to use [with a specific call out to OpenClaw in the documentation] — The Next Web

Nvidia Is Planning to Launch an Open-Source AI Agent Platform [Nvidia is readying a new approach to software that embraces AI agents similar to OpenClaw.] — Wired

Perplexity turns your Mac mini into a 24/7 AI agent — The Next Web

Ramp Launches Agent Cards to Enable Secure, Autonomous AI Spending [Stripe also launched a similar product.] — Stable Dash

Philosophy

The lump of cognition fallacy — Andy Masley

Legal

OpenAI sued for practicing law without a license — ABA Journal

Science & Medicine

Amazon Web Services announces an AI agent-powered platform for healthcare organizations. [The product is meant to automate repetitive administrative tasks such as appointment scheduling, documentation, and patient verification.] — TechCrunch

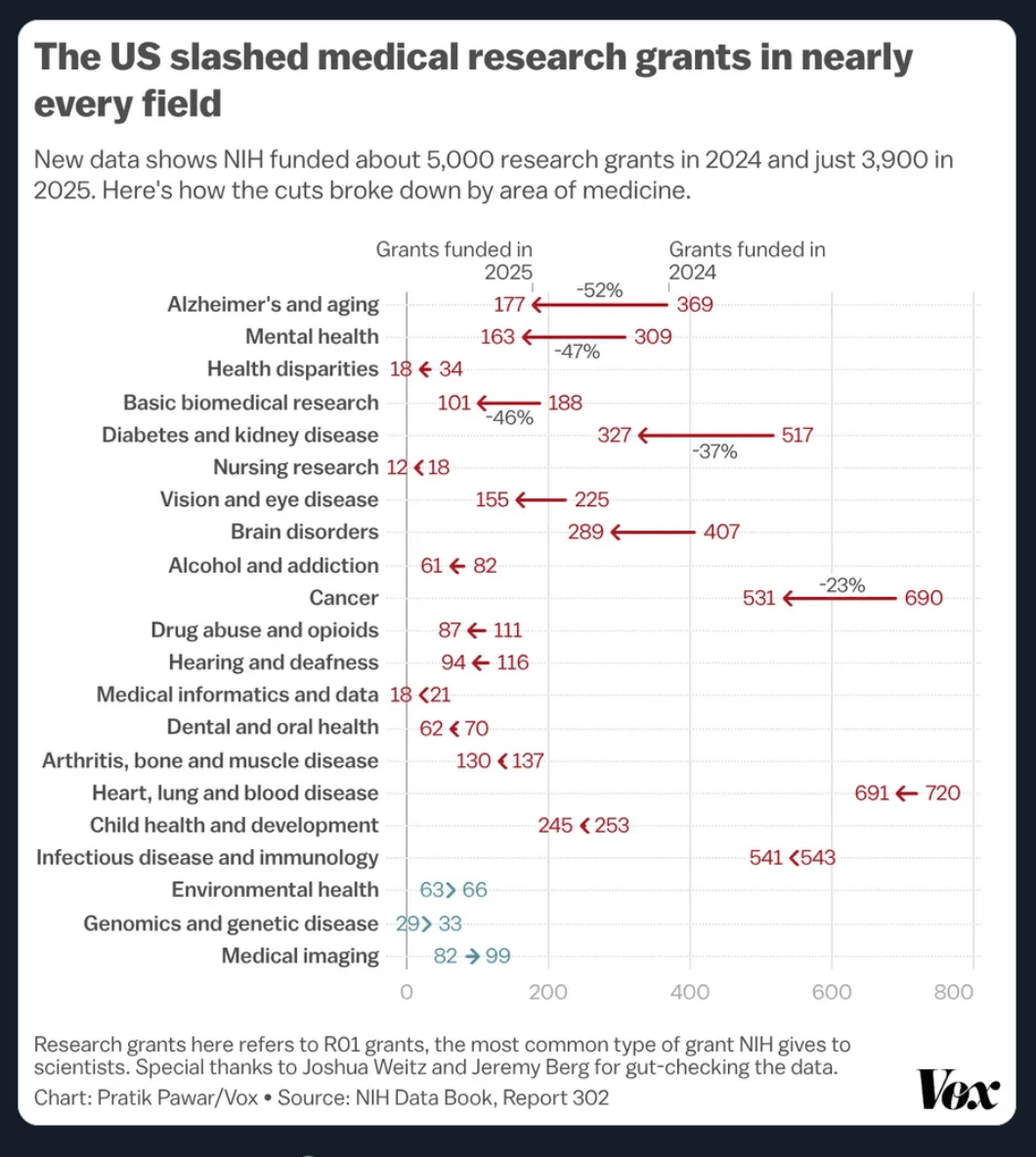

Paul is using AI to fight his dog's incurable cancer [This piece got a lot of attention on social media, and it’s both exciting and probably an exaggeration of what is realistically possible, but I can’t help but read it in the context of how much medical research grants have been cut in the last year. We’re losing so much.] — UNSW

Job market

What is the impact of AI on productivity? [Increases in productivity are now showing up in macroeconomic data.] — Alex Imas

The Lawyers and Scientists Training AI to Steal Their Career — New York Magazine

Model & Product updates

OpenAI Ships GPT-5.3 Instant With 27% Fewer Hallucinations, a Less Preachy Tone, and Improved Writing Capabilities — Implicator AI

OpenAI introduces GPT-5.4 with more knowledge-work capability — Ars Technica (OpenAI announcement with a couple of videos)

Google just launched Gemini 3.1 Flash-Lite — 7 prompts to test its new 'Thinking' mode — Tom’s Guide

OpenAI delays ChatGPT’s ‘adult mode’ again — TechCrunch

Education

Lesson Plan: Iterative GenAI Prompting (“Be the Boss”) — Digital Learning Lab

Music

LL Cool J on AI in music — YouTube

The business of AI

Meta reportedly plans sweeping layoffs as AI costs increase — The Guardian

ChatGPT users research products but won't buy there, forcing OpenAI to rethink its commerce strategy — Decoder

OpenAI reportedly plans to double its workforce to 8,000 employees [Among the hires will be “employees tasked with helping businesses better utilize its AI tools.”] — Engadget

Other

Some OpenAI staff are fuming about its Pentagon deal — CNN Business

ChatGPT’s Translation Skills Parallel Those of Most Human Translators [Experts with 10-plus years of experience outperformed the LLM.] — IEEE Spectrum

Chatbots are unavailable more often lately because of big increases in demand — Surfing Complexity

Giving LLMs a personality is just good engineering — Sean Goedecke

How AI Damages Work Relationships—and Where It Can Actually Help — Harvard Business Review

Coding After Coders: The End of Computer Programming as We Know It — New York Times

I use Gemini when I'm bored — and it's better than doomscrolling — Android Police

AI Isn’t Lightening Workloads. It’s Making Them More Intense. — Wall Street Journal

Which AI harms and risks will mobilise the public to act? — Social Change Lab

What is AI Sidequest?

Are you interested in the intersection of AI with language, writing, and culture? With maybe a little consumer business thrown in? Then you’re in the right place!

I’m Mignon Fogarty: I’ve been writing about language for almost 20 years and was the chair of media entrepreneurship in the School of Journalism at the University of Nevada, Reno. I became interested in AI back in 2022 when articles about large language models started flooding my Google alerts. AI Sidequest is where I write about stories I find interesting. I hope you find them interesting too.

Written by a human